Introduction

Moisture is one of the most underestimated variables in steel manufacturing. Trace amounts of water vapor in furnace atmospheres or raw materials can trigger surface defects, structural weakening, and costly scrap—yet many operations monitor moisture inconsistently or not at all. A 2022 electric arc furnace incident at TimkenSteel resulted in one fatality and severe injuries when water became encapsulated in molten metal, underscoring the catastrophic consequences of inadequate moisture control.

That risk extends across every stage of production. Effective moisture measurement spans raw material handling and sintering, heat treatment and bright annealing, casting, and final product finishing — and choosing the right technique at each stage directly determines product quality, operational efficiency, and worker safety.

Even seemingly minor deviations can have real consequences. Blanketing-gas moisture that exceeds its specified threshold by just a few ppmv, for example, can cause visible oxidation defects that render automotive strip nonconformant.

TL;DR

- Moisture affects steel quality at multiple process stages: raw material handling, sintering, heat treatment, and casting

- Techniques range from dew point analyzers and TDLAS for gas-phase measurement to NIR and microwave sensors for solid materials — each suited to different process conditions

- The right technique depends on process stage, temperature conditions, and required measurement accuracy

- Integrating real-time moisture data into process control reduces defects and improves furnace efficiency

What Is Moisture Measurement in Steel Manufacturing?

Moisture measurement in steelmaking is the continuous or periodic tracking of water vapor (in process gases and atmospheres) or water content (in solid materials) at critical points across production. Results are expressed in units such as dew point temperature (°C/°F dp), parts per million by volume (ppmv), or percentage moisture content (%MC).

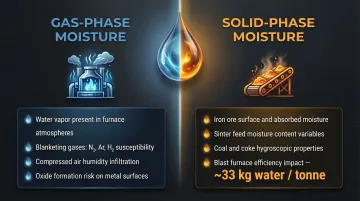

The steel industry faces two distinct moisture measurement challenges:

- Gas-phase moisture — Water vapor in furnace atmospheres, blanketing gases (nitrogen, argon, hydrogen), and compressed air systems. Left uncontrolled, it reacts with hot steel surfaces to form oxides that degrade finish and mechanical properties.

- Solid-phase moisture — Water content in iron ore, sinter feed, coal, and other raw materials. A charge with 1% ore burden moisture and 5% coke moisture introduces approximately 33 kg of water per tonne of hot metal into the blast furnace, driving up coke consumption and cutting productivity.
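The 33 kg figure above can be reproduced with a simple mass balance. The sketch below is illustrative only: the charge rates (1,600 kg of ore burden and 350 kg of coke per tonne of hot metal) are assumptions chosen for the example, not values stated in this article, and real rates vary from furnace to furnace.

```python
def water_input_kg_per_thm(charges):
    """Sum the water carried into the furnace per tonne of hot metal (tHM).

    charges: iterable of (as_charged_mass_kg_per_thm, moisture_fraction) pairs,
    where moisture_fraction is on a wet (as-charged) basis, e.g. 0.05 for 5%.
    """
    return sum(mass * moisture for mass, moisture in charges)


# ASSUMED charge rates, for illustration only (not from the article).
ORE_BURDEN_KG_PER_THM = 1600.0
COKE_KG_PER_THM = 350.0

water = water_input_kg_per_thm([
    (ORE_BURDEN_KG_PER_THM, 0.01),  # 1% ore burden moisture
    (COKE_KG_PER_THM, 0.05),        # 5% coke moisture
])
print(f"{water:.1f} kg of water per tonne of hot metal")
```

With these assumed rates the ore contributes 16 kg and the coke 17.5 kg, which lands close to the figure quoted above. Changing either moisture fraction shows how quickly the water load moves with raw material condition.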

Measurement approaches range from laboratory-based reference methods (Karl Fischer titration) to continuous online analyzers, and from contact-based sensors to fully contactless systems. In practice, the right choice hinges on whether real-time process feedback is needed or whether periodic spot-checks are sufficient — a distinction that separates online analyzers from lab methods and shapes every other decision that follows.

Why Moisture Control Is Critical in Steel Production

Surface Oxidation and Heat Treatment Rejection Rates

In heat treatment and bright annealing, even small deviations in blanketing gas moisture cause water vapor to react with hot steel surfaces, forming undesirable metal-oxide coatings. For common stainless steel alloys, maintaining a dew point of -40°C or lower is required to prevent chromium oxide (Cr₂O₃) formation during processing at temperatures above 1010°C. When moisture exceeds specification, these oxide layers degrade surface finish, alter mechanical properties, and can render entire batches nonconformant.

Safety Risks and Blast Furnace Efficiency

Excess moisture in raw materials (ore, sinter, pellets) reduces blast furnace efficiency and increases coke consumption. Moisture acts as thermal ballast: evaporating it absorbs heat, and the resulting steam occupies furnace volume. Wet materials contacting molten iron can also trigger dangerous steam explosions, and the 2022 TimkenSteel fatality showed directly what inadequate moisture monitoring can cost.

Operational Benefits of Real-Time Moisture Data

Accurate, real-time moisture measurement enables measurable gains across the production floor:

- Maintains consistent atmospheric conditions, tightening thickness and quality margins

- Optimizes moisture dosing in real time, cutting energy lost to over-drying and re-processing

- Prevents oxidation-related scrap, improving first-pass yield

Together, these improvements reduce both operating costs and material waste — two pressures every steel producer is managing.

Key Moisture Measurement Techniques for the Steel Industry

No single technique covers all steel process environments. Selecting the right approach depends on several factors:

- Temperature range and process phase (gas vs. solid)

- Contaminant presence, including particulates and acid gases

- Required response speed (continuous online vs. spot-check)

- Whether absolute concentration (ppmv) or dew point output is needed

Dew Point Analyzers (Chilled Mirror and Capacitive/Aluminum Oxide)

Chilled mirror (optical) hygrometers are the most fundamental and accurate gas-phase moisture technology. They cool a metallic mirror until condensation forms and measure the exact dew point temperature—accuracy typically reaches ±0.1°C dp, making them a reference standard for furnace atmosphere qualification and calibration applications in steel mills.

Capacitive/aluminum oxide sensors offer a more economical, fast-response alternative with an accuracy of about ±2°C dp, suitable for general compressed air and gas stream monitoring. They need periodic recalibration: typically every 12-24 months under standard conditions, and more often when exposed to polluted or contaminated industrial atmospheres.

Tunable Diode Laser Absorption Spectroscopy (TDLAS)

TDLAS is the preferred technique for continuous moisture measurement in the harshest steel environments, such as hot form hardening furnaces, where high particulate levels, acid gases, and extreme temperatures cause electrochemical and optical sensors to drift, contaminate, or fail.

TDLAS measures laser absorption at wavelengths specific to water vapor, using a backscatter configuration with no active optical components in the gas stream. This design makes it effectively immune to particulate interference. A 2002 field trial at an SSAB reheating furnace demonstrated simultaneous, contact-free monitoring with sub-second response times, outperforming conventional extractive analyzers by a significant margin.

Quartz Crystal Microbalance (QCM)

QCM analyzers are well-suited for continuous monitoring of moisture in blanketing gases (nitrogen, argon, hydrogen) fed to heat treatment ovens. The analyzer takes a sample from the gas line before the furnace entry point, providing a direct concentration measurement (ppmv) rather than dew point.

The Michell QMA601 specifies a measurement range of 0.1 to 2000 ppmv with an accuracy of ±0.1 ppmv at low concentrations. Concentration-based parameters allow tighter moisture specifications and greater operator confidence than dew point readings, which are pressure-dependent: for a gas with a fixed water content, the dew point rises as line pressure rises. This matters most in systems where pressure fluctuates during operation.
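The pressure dependence of dew point can be made concrete with a short conversion sketch. It uses a Magnus-form approximation for saturation vapor pressure over ice and treats the gas as ideal, both of which are simplifying assumptions; the function names are hypothetical and this is not how any particular analyzer computes its output. Below 0°C the quantity is strictly a frost point, which is why ice coefficients are used.

```python
import math

# Magnus-form coefficients for saturation vapor pressure over ice
# (approximation, intended for roughly -65 degC to 0 degC).
A_ICE_HPA = 6.112
B_ICE = 22.46
C_ICE_DEGC = 272.62


def saturation_vapor_pressure_ice_hpa(frost_point_c):
    """Water vapor partial pressure (hPa) at the given frost point."""
    return A_ICE_HPA * math.exp(B_ICE * frost_point_c / (C_ICE_DEGC + frost_point_c))


def dew_point_to_ppmv(frost_point_c, total_pressure_hpa):
    """Volume fraction of water (ppmv), assuming ideal-gas behavior."""
    vapor_hpa = saturation_vapor_pressure_ice_hpa(frost_point_c)
    return 1e6 * vapor_hpa / total_pressure_hpa


def ppmv_to_dew_point(ppmv, total_pressure_hpa):
    """Invert the Magnus form: frost point (degC) for a given ppmv and pressure."""
    vapor_hpa = ppmv * 1e-6 * total_pressure_hpa
    x = math.log(vapor_hpa / A_ICE_HPA)
    return C_ICE_DEGC * x / (B_ICE - x)


# A -40 degC reading taken at atmospheric pressure...
ppmv = dew_point_to_ppmv(-40.0, 1013.25)
# ...describes the same gas as a much higher reading at 7000 hPa line pressure.
dp_at_line_pressure = ppmv_to_dew_point(ppmv, 7000.0)
print(f"{ppmv:.0f} ppmv -> {dp_at_line_pressure:.1f} degC at 7000 hPa")
```

Under these assumptions, a -40°C dew point at atmospheric pressure corresponds to roughly 127 ppmv, and the same gas compressed to 7000 hPa shows a dew point of about -22°C. The ppmv value does not change with pressure, which is the practical argument for specifying limits in concentration terms.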

NIR and Microwave Sensors for Solid Materials

Near-infrared (NIR) and microwave/radio-frequency (RF) methods are the primary approaches for measuring moisture in solid raw materials—iron ore, sinter feed, pellets, coal, and coke.

NIR sensors:

- Measure absorption of specific infrared wavelengths (1400-1450 nm and 1900-1940 nm) by surface water molecules

- Best for conveyor belt monitoring where surface moisture is representative

- Limited to surface measurement—do not penetrate bulk material

Microwave/RF sensors:

- Utilize water's high dielectric constant to measure phase shift and attenuation

- Penetrate deep into bulk materials for volumetric moisture content

- Require compensation from belt weigher or density measurement

Both can be installed as non-contact sensors on conveyor belts for real-time inline measurement without process disruption.

Karl Fischer Titration (Laboratory Reference Method)

Karl Fischer titration is the definitive laboratory method for measuring trace moisture in solid or liquid samples. It provides the highest accuracy and is often used to calibrate or validate online sensors.

Its limitation: it is a destructive, batch method unsuitable for continuous process control, making it a quality assurance tool rather than an operational monitoring solution.

Where to Measure: Key Stages in the Steel Production Process

Effective moisture management requires measurement at multiple points along the production chain, not just at one location. The choice of technology shifts at each stage based on operating conditions.

Raw Material Receiving and Storage

Moisture content in incoming iron ore, coal, and sinter feed must be measured on arrival and during storage. Moisture variations affect:

- Weighing accuracy for charge calculations

- Blast furnace material balance

- Logistics and handling characteristics

Recommended approach: NIR or microwave online sensors on conveyor belts for continuous monitoring, with Karl Fischer titration for incoming batch validation.

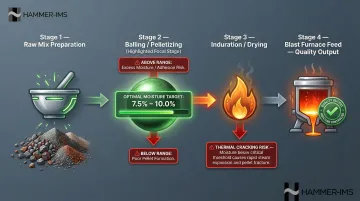

Sintering and Pelletizing

Optimal green pellet moisture is critical for pellet mechanical strength and reduction efficiency in the blast furnace. Green pellets require strict moisture control (7.5%–10.0%)—too wet and pellets disintegrate; too dry and they crack during thermal processing.

Thermal cracking occurs if the pellet's mechanical strength cannot endure inner vapor pressure generated by evaporating moisture during induration. Inline moisture sensors (NIR or microwave) on the mixing/balling stage enable closed-loop moisture dosing control, directly improving pellet quality consistency.
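The dosing calculation behind such a loop is a wet-basis mass balance. The sketch below shows only that calculation, not a full controller; the function name and the example feed values are hypothetical, and a real system would add filtering, transport-delay handling, and limits on the water valve.

```python
def water_to_add_kg(feed_mass_kg, measured_moisture, target_moisture):
    """Water needed to raise a wet feed to the target moisture (wet basis).

    Moisture values are fractions of total wet mass, e.g. 0.09 for 9%.
    Derived from (feed * measured + w) / (feed + w) = target.
    """
    for value in (measured_moisture, target_moisture):
        if not 0.0 <= value < 1.0:
            raise ValueError("moisture must be a fraction in [0, 1)")
    if target_moisture <= measured_moisture:
        # Feed is already at or above target; adding water cannot dry it.
        return 0.0
    return feed_mass_kg * (target_moisture - measured_moisture) / (1.0 - target_moisture)


# Hypothetical example: 1000 kg of feed measured at 7.0%, target 9.0%.
feed_kg = 1000.0
added = water_to_add_kg(feed_kg, 0.07, 0.09)
resulting = (feed_kg * 0.07 + added) / (feed_kg + added)
print(f"add {added:.1f} kg of water, resulting moisture {resulting:.3f}")
```

Note the denominator: because the added water also increases the total mass, the required addition is slightly more than the naive 2% of feed mass.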

Heat Treatment Furnaces and Bright Annealing Lines

This is the most measurement-intensive stage. Moisture in blanketing gases (nitrogen, argon, hydrogen) must be continuously monitored upstream of furnace entry to prevent oxidation.

Recommended technologies:

- TDLAS for harsh furnace environments with particulates and acid gases

- QCM or chilled mirror analyzers for cleaner controlled-atmosphere lines

- Dew point transmitters for compressed air instrumentation

Sampling systems require appropriate filtration, tubing, and anti-diffusion coils to protect sensors and deliver representative measurements. Heated sample lines must maintain tube temperature above dew point to prevent condensation during gas transport.

Casting and Final Product Handling

In casting, moisture in molds, ladle linings, and the surrounding atmosphere can cause gas porosity (hydrogen pickup) in the final product. Liquid iron can reduce water to hydrogen: at low oxygen concentrations, molten steel picks up dissolved hydrogen from humid atmospheres, producing "fish-eye" defects and reduced ductility.

Direct inline moisture measurement is less common at this stage. The practical approach is continuous dew point and humidity monitoring at key locations:

- Mold preparation and drying areas

- Ladle lining storage and preheating zones

- Ambient atmosphere around the casting floor

Moisture Measurement in Practice: A Steel Plant Walkthrough

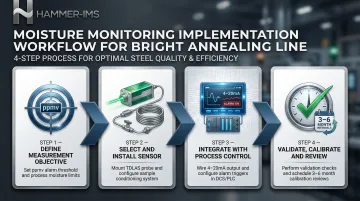

Consider a steel plant running a continuous bright annealing line for flat-rolled automotive strip, using a hydrogen-nitrogen blanketing atmosphere, where occasional surface oxidation defects have been traced back to moisture excursions in the gas supply.

Step 1 – Define the measurement objective

The target is continuous monitoring of moisture concentration in the blanketing gas entering each furnace zone, with an alarm threshold set in ppmv. Concentration (not dew point alone) is used to avoid pressure-dependent measurement errors—as pressure fluctuates during production, dew point readings become unreliable without constant pressure correction.

Step 2 – Select and install the sensor

TDLAS is selected for its tolerance to the furnace environment. A sample conditioning system is installed to draw gas from the blanketing gas feed line, filter particulates, and deliver a clean, conditioned sample to the analyzer.

Common mistake to avoid: Skipping adequate sample conditioning leads to sensor contamination and false readings. Proper filtration and heated sample lines are essential — without them, even a well-specified analyzer will produce unreliable results.

Step 3 – Integrate with process control and establish response protocols

The analyzer output (4-20 mA or digital signal) is fed into the DCS/PLC for real-time trending. A high-moisture alarm triggers investigation of gas supply quality and, if the excursion is confirmed, an automatic adjustment or production hold, directly linking measurement to production action.

Without this integration, excursion events get logged but not acted on — and surface defects continue.
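As a rough illustration of Step 3, the sketch below scales a 4-20 mA signal to ppmv and applies a high alarm with hysteresis so the alarm does not chatter around the threshold. Everything specific here is an assumption for the example: the 0-100 ppmv analyzer range, the trip and reset values, the loop fault limits, and the class and function names. In a real plant this logic would normally live in the DCS/PLC rather than in Python.

```python
from dataclasses import dataclass


def current_to_ppmv(current_ma, range_low_ppmv, range_high_ppmv):
    """Linearly scale a 4-20 mA loop current to the analyzer's ppmv range."""
    # ASSUMED fault limits: currents far outside 4-20 mA indicate a loop fault.
    if current_ma < 3.6 or current_ma > 21.0:
        raise ValueError("loop current out of range; check wiring or analyzer")
    span = range_high_ppmv - range_low_ppmv
    return range_low_ppmv + (current_ma - 4.0) / 16.0 * span


@dataclass
class HighMoistureAlarm:
    trip_ppmv: float   # alarm activates at or above this value
    reset_ppmv: float  # alarm clears only at or below this lower value
    active: bool = False

    def update(self, ppmv):
        """Feed one reading; return True while the alarm is active."""
        if not self.active and ppmv >= self.trip_ppmv:
            self.active = True
        elif self.active and ppmv <= self.reset_ppmv:
            self.active = False
        return self.active


# Hypothetical configuration and readings.
alarm = HighMoistureAlarm(trip_ppmv=20.0, reset_ppmv=15.0)
readings_ma = [5.6, 7.6, 6.8, 6.0]
states = [alarm.update(current_to_ppmv(ma, 0.0, 100.0)) for ma in readings_ma]
print(states)
```

The third reading (about 17.5 ppmv) is below the trip point but above the reset point, so the alarm stays active until the value falls to 15 ppmv or lower. That gap is a design choice that trades a slightly longer alarm for fewer nuisance transitions.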

Step 4 – Validate, calibrate, and review

Ongoing measurement integrity depends on three practices:

- Validate periodically against a reference instrument (chilled mirror or calibration gas)

- Run regular sensor performance checks to catch drift early

- Review historical moisture data after any excursion event to identify root cause

In harsh industrial environments, calibration intervals of 3-6 months are typical, which is significantly more frequent than laboratory settings require.
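A periodic validation check can be as simple as comparing paired readings from the analyzer and the reference instrument. The sketch below is one possible form, not a prescribed procedure: the function name, the 0.5 ppmv tolerance, and the sample readings are all made up for illustration.

```python
from statistics import mean


def drift_check(analyzer_ppmv, reference_ppmv, tolerance_ppmv):
    """Return (mean bias, needs_recalibration) for paired readings.

    Bias is analyzer minus reference, averaged over all pairs.
    """
    if not analyzer_ppmv or len(analyzer_ppmv) != len(reference_ppmv):
        raise ValueError("need equal-length, non-empty reading lists")
    bias = mean(a - r for a, r in zip(analyzer_ppmv, reference_ppmv))
    return bias, abs(bias) > tolerance_ppmv


# Hypothetical validation run against a chilled mirror reference.
analyzer = [10.4, 20.6, 50.9]
reference = [10.0, 20.0, 50.0]
bias, needs_recal = drift_check(analyzer, reference, tolerance_ppmv=0.5)
print(f"mean bias {bias:+.2f} ppmv, recalibrate: {needs_recal}")
```

Logging the bias from each validation run, rather than only the pass/fail result, makes slow drift visible before it reaches the tolerance.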

How Hammer-IMS Can Support Your Steel Operation

Hammer-IMS builds non-contact, non-nuclear measurement systems designed for demanding industrial environments, including steel production. Their M-Ray millimeter-wave technology delivers real-time measurement data without the regulatory burden, safety risks, or operational complexity that come with nuclear-based gauging.

Hammer-IMS systems integrate with Connectivity 3.0 software to feed measurement data directly into production control systems. This enables closed-loop process adjustment: the same immediate-feedback logic that makes continuous moisture monitoring effective applies equally to thickness, basis weight, and other quality parameters across the steel line. The result is tighter production margins and less material waste at the same time.

For steel producers evaluating this type of quality control technology, the core capabilities include:

- Contactless measurement across multiple parameters — moisture, thickness, and basis weight — on a single line

- Closed-loop integration via Connectivity 3.0 for automatic process correction without manual intervention

- Data logging and analytics for trend analysis and process documentation

- Non-radioactive technology that eliminates radiation licensing requirements and simplifies safety compliance

For facilities under pressure to tighten product consistency while cutting their environmental and compliance overhead, that combination of precision and clean-technology design is a practical place to start.

Frequently Asked Questions

What methods and units are used to measure moisture in the steel industry?

The main measurement methods include:

- Dew point analyzers (chilled mirror and capacitive/aluminum oxide)

- TDLAS (tunable diode laser absorption spectroscopy)

- Quartz crystal microbalance analyzers

- NIR/microwave sensors for solid raw materials

- Karl Fischer titration for laboratory validation

Common units are dew point temperature (°C/°F dp), ppmv for gas-phase moisture, and %MC for solid materials.

What types of moisture meters are used in the steel industry?

Common instruments include:

- Chilled mirror hygrometers

- Capacitive/aluminum oxide transmitters

- TDLAS analyzers

- Quartz crystal microbalance analyzers

- NIR or microwave sensors for solid raw materials

Selection depends on whether you're measuring gas-phase or solid-phase moisture and how demanding the operating environment is.

How much moisture can steel absorb?

Steel itself absorbs negligible moisture; the key issue is moisture in the surrounding process environment. Water vapor in furnace atmospheres reacts with hot steel surfaces to form oxides. Even a few ppmv above the threshold in blanketing gas can cause visible surface defects and degrade mechanical properties.

What is dew point and why does it matter in steel heat treatment?

Dew point is the temperature at which water vapor in a gas begins to condense. In steel heat treatment, it is the primary indicator of atmospheric moisture. Maintaining the correct dew point (typically -40°C dp or lower for common stainless steel alloys) prevents oxidation reactions and ensures bright, oxide-free surfaces.

How does moisture cause defects in steel products?

Moisture drives two defect mechanisms:

- Heat treatment: Water vapor reacts with hot steel to form surface oxide scale, degrading finish and coating adhesion.

- Casting: Dissolved hydrogen from moisture creates gas porosity in the solidified product, reducing mechanical strength and causing rejection.