Introduction

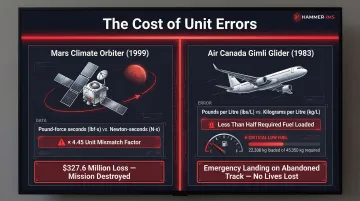

Picture a textile manufacturer in Belgium shipping coated fabric rolls to an automotive supplier in the United States. The Belgian production team measures coating thickness in micrometres, while the US customer's quality specification calls for mils. A simple conversion error, such as confusing 25 micrometres with 25 mils (635 micrometres), results in material roughly 25 times thicker than required, scrapping an entire production run. The same class of error reaches further than factory floors: NASA lost the $327.6 million Mars Climate Orbiter in 1999 when ground software provided thruster data in pound-force seconds instead of the specified newton-seconds, sending the spacecraft off course.

A measurement system is a structured collection of units and rules for quantifying physical properties — length, mass, time, temperature — consistently across organizations and borders. Without agreed-upon systems, global supply chains break down: specifications become ambiguous, quality control fails, and costly errors compound.

This article covers the three major measurement systems in use today (SI/metric, Imperial, and US Customary), the seven fundamental SI base units that underpin modern science and industry, and why measurement consistency directly determines manufacturing quality and profitability.

TLDR

- Measurement systems standardize how physical properties are quantified, enabling clear communication across borders and industries

- Three systems dominate: SI/metric (global standard), British Imperial (historical), and US Customary (primarily USA)

- SI builds on seven base units: metre, kilogram, second, ampere, kelvin, mole, and candela

- The US remains the primary exception to global SI adoption, creating conversion risks in international trade

- Hammer-IMS M-Ray technology outputs measurement data in both metric and imperial units, eliminating conversion errors at the source

What Is a Measurement System?

A measurement system provides a standardized framework of units (metres, kilograms, seconds) and the mathematical relationships between them. The core purpose is uniformity: ensuring that a kilogram measured in Belgium equals a kilogram measured in Japan, eliminating ambiguity when companies exchange specifications, verify quality, or certify products for international markets.

Units vs. Standards: A Critical Distinction

The International Vocabulary of Metrology (VIM) distinguishes between a unit and a measurement standard:

- Unit: The conceptual name of a quantity (e.g., "kilogram")

- Standard: The physical or scientific reference that defines that unit (e.g., a calibrated reference weight traceable to fundamental constants)

For industrial applications, this matters practically: your production scale displays values in the unit kilograms, but its accuracy depends on calibration against a standard traceable to recognized authorities like national metrology institutes.

Historical Evolution: Custom vs. Designed Systems

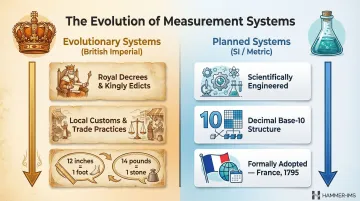

Measurement systems developed through two distinct paths:

- Evolutionary systems (British Imperial): grew organically from royal decrees, local customs, and trade practices, producing non-decimal conversions like 12 inches to a foot or 14 pounds to a stone.

- Planned systems (SI/metric): deliberately engineered using scientific principles and decimal structure, formally adopted by France on 7 April 1795 to replace fragmented regional measures with a unified, science-based framework.

The Major Measurement Systems Explained

While dozens of historical systems existed, three dominate modern industrial and commercial use: the International System of Units (SI/metric), the British Imperial system, and the US Customary system.

Metric System and SI

The metric system originated during the French Revolution on two founding principles: scientific observation (the original metre was one ten-millionth of the distance from the North Pole to the equator) and decimal structure (base-10). That base-10 structure makes conversions straightforward: 1,000 millimetres equal 1 metre; 1,000 grams equal 1 kilogram. This simplicity drove rapid global adoption.
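The base-10 structure means a prefix change is only a shift in the power of ten. A minimal Python sketch of that idea follows; the `PREFIX_EXPONENT` table and the `convert_prefix` helper are illustrative names, not part of any standard library.

```python
# Illustrative helper: convert between metric prefixes of the same unit
# by shifting the power of ten. Only a few common prefixes are listed.
PREFIX_EXPONENT = {
    "micro": -6,
    "milli": -3,
    "": 0,        # the unprefixed unit (metre, gram, ...)
    "kilo": 3,
}


def convert_prefix(value: float, from_prefix: str, to_prefix: str) -> float:
    """Rescale a value from one metric prefix to another."""
    shift = PREFIX_EXPONENT[from_prefix] - PREFIX_EXPONENT[to_prefix]
    return value * 10 ** shift


metres = convert_prefix(1000, "milli", "")      # 1,000 mm expressed in m
kilograms = convert_prefix(1000, "", "kilo")    # 1,000 g expressed in kg
print(metres, kilograms)
```

No lookup of conversion factors is needed beyond the prefix exponents, which is the practical advantage the decimal structure provides.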

The 11th General Conference on Weights and Measures (CGPM) formalized the modern metric system in October 1960, adopting the name "Système International d'Unités" (SI). Today, SI is the official measurement framework in 195 countries and underpins every major scientific discipline worldwide. Only three nations — the US, Myanmar, and Liberia — have not adopted metric as their primary system.

British Imperial System

Standardized through the Weights and Measures Act of 1824, the Imperial system is built on the foot-pound-second. Once used across the British Empire, it has been largely replaced by metric in commercial and scientific contexts. UK law now requires metric units for all trade, with only narrow statutory exceptions — road distances in miles and draught beer sold by the pint among them.

US Customary System

The US Customary system shares roots with Imperial but diverged significantly, particularly in volume and mass. NIST explicitly advises against calling the US system "Imperial" because of meaningful differences:

- US liquid gallon: 3.785 litres

- Imperial gallon: 4.546 litres (20% larger)

- US short ton: 907.2 kg (2,000 lb)

- Imperial long ton: 1,016 kg (2,240 lb)

The US remains the primary everyday and commercial user of this system, while scientific and medical contexts in the US typically use SI.
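One way to keep these look-alike units apart in software is to make the variant an explicit, required argument. The sketch below is an assumption about how that could be done, with hypothetical names (`Gallon`, `Ton`, `gallons_to_litres`, `tons_to_kg`). The conversion factors are the exact legal definitions, of which the figures above are rounded values.

```python
from enum import Enum


class Gallon(Enum):
    US_LIQUID = 3.785411784   # litres per US liquid gallon
    IMPERIAL = 4.54609        # litres per Imperial gallon


class Ton(Enum):
    SHORT = 907.18474         # kg per US short ton (2,000 lb)
    LONG = 1016.0469088       # kg per Imperial long ton (2,240 lb)
    METRIC = 1000.0           # kg per metric ton


def gallons_to_litres(volume: float, kind: Gallon) -> float:
    """Convert gallons to litres; the caller must state which gallon."""
    return volume * kind.value


def tons_to_kg(mass: float, kind: Ton) -> float:
    """Convert tons to kilograms; the caller must state which ton."""
    return mass * kind.value


order = 1000  # the same nominal quantity read two different ways
gap_litres = (gallons_to_litres(order, Gallon.IMPERIAL)
              - gallons_to_litres(order, Gallon.US_LIQUID))
print(f"Gap on a {order}-gallon order: {gap_litres:.1f} L")
print(f"Long ton minus short ton: {tons_to_kg(1, Ton.LONG) - tons_to_kg(1, Ton.SHORT):.1f} kg")
```

Because there is no default variant, a bare "gallon" or "ton" cannot pass through the conversion unnoticed.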

The 7 SI Base Units

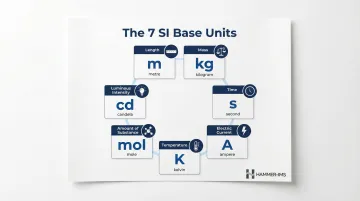

SI is built on seven base units from which all other units (called derived units) can be expressed. Since the redefinition adopted in Resolution 1 of the 26th CGPM (2018), which took effect on 20 May 2019, these base units are defined using fixed values of fundamental physical constants rather than physical objects, allowing any suitably equipped laboratory worldwide to reproduce them without a physical reference artefact.
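The statement that every derived unit can be expressed through the base units can be made concrete by treating each unit as a vector of seven exponents. The following Python sketch shows that bookkeeping only; the `Dimension` class and `base` helper are hypothetical names invented for this example.

```python
from dataclasses import dataclass

BASE_SYMBOLS = ("m", "kg", "s", "A", "K", "mol", "cd")


@dataclass(frozen=True)
class Dimension:
    """A unit written as integer exponents of the seven SI base units."""
    exponents: tuple  # one exponent per entry of BASE_SYMBOLS, same order

    def __mul__(self, other):
        return Dimension(tuple(a + b for a, b in zip(self.exponents, other.exponents)))

    def __truediv__(self, other):
        return Dimension(tuple(a - b for a, b in zip(self.exponents, other.exponents)))

    def __str__(self):
        parts = [sym if exp == 1 else f"{sym}^{exp}"
                 for sym, exp in zip(BASE_SYMBOLS, self.exponents) if exp != 0]
        return "·".join(parts) or "1"


def base(symbol: str) -> Dimension:
    """Return the Dimension of a single base unit."""
    return Dimension(tuple(1 if s == symbol else 0 for s in BASE_SYMBOLS))


METRE, KILOGRAM, SECOND = base("m"), base("kg"), base("s")

NEWTON = KILOGRAM * METRE / (SECOND * SECOND)   # force
PASCAL = NEWTON / (METRE * METRE)               # pressure
JOULE = NEWTON * METRE                          # energy

print("N  =", NEWTON)
print("Pa =", PASCAL)
print("J  =", JOULE)
```

Multiplying units adds exponents and dividing subtracts them, so any derived unit reduces to one such seven-element vector.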

Core Industrial Units: Metre, Kilogram, Second

These three units underpin the vast majority of industrial measurement. In manufacturing contexts — sheet extrusion, textile coating, process timing — they appear in nearly every specification, quality check, and control system.

Metre (m) — length: Defined by fixing the speed of light in vacuum at exactly 299,792,458 m/s. On the production floor, metres govern sheet width, extrusion line dimensions, and material coverage across textile coating, plastic film, and nonwovens applications.

Kilogram (kg) — mass: Defined by fixing the Planck constant at exactly 6.62607015×10⁻³⁴ joule-seconds — replacing the physical platinum-iridium cylinder that served as the reference from 1889 to 2019. Industrial use spans material weight, grammage (g/m²), and batch quantities.

Second (s) — time: Defined by fixing the ground-state hyperfine transition frequency of the caesium-133 atom at exactly 9,192,631,770 Hz. Process cycle time, line speed in metres per minute, and sensor sampling rates all depend on the second, particularly in real-time quality control systems.

Key industrial applications at a glance:

- Length (m): Sheet width tolerances, web span, extrusion die gap

- Mass (kg): Basis weight (g/m²), batch loading, material yield

- Time (s): Line speed, sampling frequency, cycle monitoring

Electrical and Temperature Units: Ampere, Kelvin

For process engineers, these two units shift the focus from geometry and mass to the energy inputs that drive — and must be precisely controlled within — industrial systems.

Ampere (A) — electric current: Defined by fixing the elementary charge at exactly 1.602176634×10⁻¹⁹ coulombs. In manufacturing, amperes govern the electrical systems powering production equipment, control panels, and sensor networks.

Kelvin (K) — thermodynamic temperature: Defined by fixing the Boltzmann constant at exactly 1.380649×10⁻²³ joules per kelvin. Extrusion lines, curing ovens, and coating systems all depend on precise thermal management — even small deviations shift product specifications outside tolerance.

Specialised Units: Mole and Candela

These two units operate at the edges of typical industrial measurement. They matter in specific sectors but rarely appear in mechanical production monitoring.

Mole (mol) — amount of substance: Defined by fixing Avogadro's constant at exactly 6.02214076×10²³ entities per mole. Most relevant in chemical and pharmaceutical manufacturing; less common in mechanical or continuous-process industries.

Candela (cd) — luminous intensity: Defined by fixing the luminous efficacy of monochromatic 540 THz radiation at exactly 683 lumens per watt. Used in photometry and lighting system design.

Summary Table: The 7 SI Base Units

| Base Quantity | Unit Name | Symbol |
|---|---|---|
| Length | metre | m |
| Mass | kilogram | kg |
| Time | second | s |
| Electric current | ampere | A |
| Thermodynamic temperature | kelvin | K |
| Amount of substance | mole | mol |
| Luminous intensity | candela | cd |

Metric vs. Imperial vs. US Customary: A Quick Comparison

The three systems differ significantly across common measurement categories, creating serious risks when specifications cross borders.

Key Differences Across Common Measures

| Measurement Type | SI/Metric | British Imperial | US Customary |
|---|---|---|---|
| Length | metre (m) | foot, yard, mile | foot, yard, mile |
| Mass/Weight | kilogram (kg) | pound (lb), stone | pound (lb) |
| Volume (liquid) | litre (L) | Imperial gallon (4.546 L) | US gallon (3.785 L) |
| Volume (dry) | litre (L) | [abolished 1824] | US dry gallon (4.405 L) |
| Large mass | metric ton (1,000 kg) | long ton (1,016 kg) | short ton (907.2 kg) |
| Temperature | Celsius (°C), Kelvin (K) | Fahrenheit (°F) | Fahrenheit (°F) |

Critical practical point: An order for "1,000 gallons" of industrial solvent is ambiguous by roughly 760 litres: it means 4,546 L if the supplier reads it as Imperial gallons but only 3,785 L if read as US gallons. This 20% difference can halt production or violate safety specifications.

Real-World Consequences: Mars Climate Orbiter and Gimli Glider

Two incidents show what unit mismatches cost in practice:

- Mars Climate Orbiter (1999): Ground software sent thruster data in pound-force seconds; navigation software expected newton-seconds. The factor-of-4.45 error sent the spacecraft into Martian atmosphere at an unsurvivable altitude — a $327.6 million loss.

- Air Canada Flight 143, the "Gimli Glider" (1983): Ground crews applied a pounds-per-litre conversion factor instead of kilograms per litre, loading less than half the required fuel. The Boeing 767 ran out of fuel mid-flight and glided to an emergency landing at Gimli, Manitoba.
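Both incidents involved a bare number crossing an interface without its unit. A common defensive pattern, sketched below in Python, is to carry the unit with the value and convert explicitly at the boundary. The `Impulse` class and its unit strings are hypothetical and are not taken from any flight software; the pound-force to newton factor is the exact defined value.

```python
from dataclasses import dataclass

LBF_TO_N = 4.4482216152605  # newtons per pound-force


@dataclass(frozen=True)
class Impulse:
    """An impulse value that always travels with its unit."""
    value: float
    unit: str  # "N*s" or "lbf*s" in this sketch

    def to_newton_seconds(self) -> float:
        if self.unit == "N*s":
            return self.value
        if self.unit == "lbf*s":
            return self.value * LBF_TO_N
        # Refuse to guess: an unknown unit is an error, not a default.
        raise ValueError(f"unknown impulse unit: {self.unit!r}")


reported = Impulse(100.0, "lbf*s")

naive = reported.value                   # what a consumer assuming N*s would read
correct = reported.to_newton_seconds()   # what the value actually represents
ratio = correct / naive
print(f"Reading the raw number understates the impulse by a factor of {ratio:.2f}")
```

The design choice is that a consumer can only obtain a number through a method that names the unit it returns, so a silent mismatch becomes an explicit conversion or an exception.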

How International Standards Enforce SI Compliance

To reduce these risks, international standards bodies mandate SI across technical and commercial contexts:

- The ISO/IEC 80000 series defines the International System of Quantities (ISQ) and the SI units used to express it in scientific and educational documents

- ISO 9001 quality certifications and most international trade contracts specify SI measurements by default

- ASTM technical standards increasingly reference SI alongside or instead of US customary units

For manufacturers operating across borders — whether sourcing materials, exporting finished goods, or calibrating process equipment — working in SI units from the outset avoids the conversion errors that derail production.

Why Measurement Consistency Matters in Industry

In manufacturing—particularly continuous materials like textiles, nonwovens, plastic film, rubber, or foam—measurement consistency directly affects product quality and profitability.

Quality Impact: Small Deviations, Large Consequences

Small measurement deviations can render entire production runs non-compliant. A plastic film specified at 25 micrometres with a ±2 micrometre tolerance has an acceptance band of 23 to 27 micrometres, so the gauge must reliably distinguish 23 micrometres (acceptable) from 22.5 micrometres (reject).

When inline sensors lack calibration or operators misread units, manufacturers ship out-of-spec material—triggering customer returns, production stoppages, and contract penalties.
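The tolerance check and the risk of misread units can be illustrated together. This is a minimal sketch, assuming readings arrive as a value plus a unit label; the function names and the unit labels `"um"` and `"mil"` are illustrative choices.

```python
MICROMETRES_PER_MIL = 25.4  # 1 mil = 0.001 inch = 25.4 micrometres


def to_micrometres(value: float, unit: str) -> float:
    """Normalise a thickness reading to micrometres."""
    if unit == "um":
        return value
    if unit == "mil":
        return value * MICROMETRES_PER_MIL
    raise ValueError(f"unsupported thickness unit: {unit!r}")


def within_tolerance(measured_um: float, nominal_um: float = 25.0,
                     tol_um: float = 2.0) -> bool:
    """True if the reading lies inside nominal ± tolerance (inclusive)."""
    return abs(measured_um - nominal_um) <= tol_um


for reading, unit in [(23.0, "um"), (22.5, "um"), (25.0, "mil")]:
    thickness = to_micrometres(reading, unit)
    verdict = "accept" if within_tolerance(thickness) else "reject"
    print(f"{reading} {unit} = {thickness:.1f} um -> {verdict}")
```

The last reading shows why the unit label matters: the number 25 looks on target, but 25 mil is 635 micrometres and fails the check by a wide margin.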

Traceability to Recognized Standards

Two international standards govern how manufacturers must handle measurement traceability:

- ISO 9001 (Clause 7.1.5.2) requires an unbroken calibration chain back to SI units via national metrology institutes or accredited laboratories, validating quality data for audits and cross-border agreements.

- ISO/IEC 17025 mandates documented calibration certificates with explicit SI traceability for testing and calibration laboratories—without this chain, measurement results cannot support compliance claims.

Measurement Uncertainty: Quantifying Doubt

The Guide to the Expression of Uncertainty in Measurement (GUM) requires explicit statements of measurement uncertainty—the parameter characterizing dispersion of values that could reasonably be attributed to the measured property. Every measurement carries uncertainty from instrument calibration, environmental conditions (temperature, vibration), and operator variability. Minimising these sources of uncertainty—through calibrated instruments, controlled environments, and standardised procedures—is what separates reliable process control from guesswork.
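For the simplest case treated in the GUM, where the contributions are uncorrelated and each has a sensitivity coefficient of one, the combined standard uncertainty is the root sum of squares of the individual standard uncertainties, and an expanded uncertainty is obtained by multiplying by a coverage factor k. The Python sketch below applies that rule; the component values are invented for illustration and do not describe any particular instrument.

```python
import math


def combined_standard_uncertainty(components):
    """Root-sum-of-squares of uncorrelated standard uncertainties."""
    return math.sqrt(sum(u ** 2 for u in components))


def expanded_uncertainty(u_c: float, k: float = 2.0) -> float:
    """Expanded uncertainty U = k * u_c (k = 2 is a common choice)."""
    return k * u_c


# Hypothetical standard uncertainties for a thickness gauge, in micrometres.
budget_um = {
    "instrument calibration": 0.03,
    "temperature drift": 0.04,
    "operator variability": 0.12,
}

u_c = combined_standard_uncertainty(budget_um.values())
U = expanded_uncertainty(u_c)
print(f"combined standard uncertainty: {u_c:.3f} um")
print(f"expanded uncertainty (k=2):    {U:.3f} um")
```

Because the terms add in quadrature, the largest component dominates the result, which is why reducing the biggest contributor usually pays off more than trimming the smaller ones.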

Real-Time Measurement Reduces Waste

Shifting from offline sampling to real-time inline measurement drastically cuts material waste. In plastic film extrusion, manufacturers using manual methods typically operate at 3% safety margins to compensate for measurement lag. For a 2-metre wide line running 24/7, this margin translates to approximately 3 metric tons of daily waste—over 1,000 metric tons annually.

Reducing average film thickness from 0.8 mil to 0.7 mil through inline control yields a 12.5% material saving (0.1 mil out of 0.8 mil). Closed-loop systems that automatically adjust process parameters based on real-time thickness data eliminate manual response lag, reducing scrap and allowing safer operation near minimum specification targets.
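The arithmetic behind these figures is easy to reproduce. The sketch below assumes that the daily waste is simply the safety margin applied to the line's throughput and that material use scales linearly with average thickness; both are simplifying assumptions, and the function names are illustrative.

```python
def annual_waste_tonnes(daily_waste_tonnes: float, days_per_year: int = 365) -> float:
    """Scale a daily waste figure to a year of continuous operation."""
    return daily_waste_tonnes * days_per_year


def implied_throughput_tonnes_per_day(daily_waste_tonnes: float,
                                      margin_fraction: float) -> float:
    """Throughput at which the given margin produces the given daily waste."""
    return daily_waste_tonnes / margin_fraction


def relative_saving(old_thickness: float, new_thickness: float) -> float:
    """Fraction of material saved when average thickness is reduced."""
    return (old_thickness - new_thickness) / old_thickness


yearly = annual_waste_tonnes(3.0)
throughput = implied_throughput_tonnes_per_day(3.0, 0.03)
saving = relative_saving(0.8, 0.7)

print(f"annual waste:       {yearly:.0f} t")
print(f"implied throughput: {throughput:.0f} t/day")
print(f"material saving:    {saving:.1%}")
```

Any thickness unit can be passed to `relative_saving` as long as both arguments use the same one, since the ratio is dimensionless.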

Hammer-IMS builds contactless measurement systems around M-Ray technology—electromagnetic millimetre-wave sensors—that deliver real-time, non-nuclear thickness and grammage data directly on the production line. At measurement speeds up to 3 kHz, these systems support line speeds of 500 metres per minute while feeding closed-loop process control with no production interruption.

How Modern Industrial Measurement Bridges the Gap

Modern industrial measurement instruments are designed to be system-agnostic, displaying results in SI units or configurable formats and outputting data that integrates with international standards regardless of regional measurement preferences.

Configurable Units and Data Integration

Advanced measurement platforms allow real-time data logging, unit configuration, and integration with production systems. Hammer-IMS's Connectivity 3.0 software, for example, supports:

- Communication over Ethernet via Modbus TCP/IP and plain TCP/IP

- Data export to Microsoft SQL databases and FTP/SFTP servers

- Integration with Grafana dashboards for historical trend analysis

- Timestamped logs with batch identifiers and recipe parameters for ISO 9001 audit trails

This means measurements taken in a Belgian textile plant can be directly compared with specifications from US or Japanese customers without conversion ambiguity.
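What removes the ambiguity is that every logged value carries its unit, timestamp, and batch context. The Python sketch below shows one possible shape for such a record. It is a generic illustration only: the field names are hypothetical and do not represent the Connectivity 3.0 data format or any vendor's schema.

```python
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass
class MeasurementRecord:
    """One logged reading; field names are illustrative assumptions."""
    timestamp: str   # ISO 8601, UTC
    batch_id: str
    quantity: str    # what was measured, e.g. "thickness"
    value: float
    unit: str        # unit stored with the value, never implied


def make_record(batch_id: str, quantity: str, value: float, unit: str,
                when: datetime = None) -> MeasurementRecord:
    """Build a record, stamping it with the current UTC time by default."""
    when = when or datetime.now(timezone.utc)
    return MeasurementRecord(when.isoformat(), batch_id, quantity, value, unit)


record = make_record(
    "BATCH-001", "thickness", 25.1, "um",
    when=datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc),  # fixed time for the example
)
line = json.dumps(asdict(record))
print(line)
```

A consumer of this log never has to infer whether 25.1 means micrometres or mils, because the unit is part of the record itself.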

Common Language for Global Operations

For industries operating across multiple countries — automotive suppliers, nonwovens manufacturers, medical device producers — measurement systems that output SI natively reduce waste, prevent specification errors, and support global certification processes.

The practical stakes are real. When a German automotive supplier sends coating thickness specifications to a US tier-one manufacturer, both parties reference the same SI units (micrometres or millimetres). This shared language sidesteps ambiguities such as the 20% gap between US and Imperial gallons and avoids the kind of conversion failure that destroyed the Mars Climate Orbiter.

Adopting SI-first engineering policies — backed by measurement platforms that output standardized data directly into production systems — removes one of the most persistent sources of preventable error in global manufacturing.

Frequently Asked Questions

What are the different systems of measurement?

The three primary systems in modern use are the International System of Units (SI/metric), the British Imperial system, and the US Customary system. SI is the globally dominant standard used in science, international trade, and most countries' everyday commerce.

What is the US measurement system called?

It is officially called the US Customary System. It shares historical roots with the British Imperial system but differs significantly in volume units (US gallon vs. Imperial gallon) and mass units (short ton vs. long ton), and remains the primary everyday system in the US — though scientific contexts default to SI.

What are the 7 SI base units?

The seven SI base units are: metre (length), kilogram (mass), second (time), ampere (electric current), kelvin (temperature), mole (amount of substance), and candela (luminous intensity). Every other SI unit is derived from combinations of these seven.

What is the difference between the metric system and the imperial system?

The metric system uses a decimal (base-10) structure, so conversions are straightforward — 1,000 millimetres equals 1 metre. The Imperial system uses non-decimal factors (12 inches to a foot, 14 pounds to a stone) and persists mainly in the UK for limited everyday applications.

Why is standardization of measurement systems important for global trade and industry?

Unit mismatches cause real damage: NASA's $327.6 million Mars Climate Orbiter was lost in 1999 because of a mix-up between SI and US customary units. Standardization also underpins international quality certifications like ISO 9001 and ISO/IEC 17025, ensuring measurements are reliably interpreted across borders without conversion errors.

What is SI and who uses it?

SI (Système International d'Unités) is the modern international standard for measurement, formally adopted in 1960. It is used by virtually every country in the world for science, medicine, engineering, and international trade. The BIPM currently has 64 Member States and 36 Associate States participating in the Metre Convention.