Manufacturing remains a cornerstone of the U.S. economy. However, today’s challenges are giving renewed urgency to solutions that boost quality, reduce waste, and streamline operations.

In 2025, the U.S. manufacturing workforce stands at about 12.72 million employees. Meanwhile, manufacturing output continues to grow: in August 2025, U.S. manufacturing production was up about 0.9% year over year.

At the same time, the sector faces persistent pressures. Many manufacturers confront labor shortages, rising labor costs, shrinking margins, increasingly strict quality standards, and rising demand for speed and flexibility.

That’s where machine vision systems come in. They help manufacturers identify quality issues, measure product dimensions, monitor production processes, assist robotic operations, and comply with regulations.

This guide walks you step by step through how to implement a machine vision system in a manufacturing setting. Use it to inform a pilot project, shape a procurement decision, or build a long-term automation roadmap.

TL;DR:

Machine vision boosts quality, reduces waste, and enables consistent high-speed inspection, making it essential for modern U.S. manufacturing facing labor shortages.

Successful implementation depends on clear defect definitions, strong lighting design, real-world PoC testing, and early operator involvement to keep the project from failing.

A robust system requires the right cameras, optics, lighting, software, and precise integration with PLCs, robots, and MES platforms for reliability.

Thorough planning, feasibility analysis, ROI modeling, technical specifications, and cross-functional alignment ensure scalable adoption of machine vision across multiple lines and plants.

Machine vision isn’t ideal for visually undetectable defects, unpredictable environments, low volumes, or insufficient budgets, reinforcing the need for proper evaluation.

Understanding Machine Vision in Manufacturing

At its core, a machine vision system enables a production line to “see,” analyze, and act on visual information with speed and precision that far exceed human capability. For manufacturers facing labor shortages, rising quality expectations, and stricter regulatory requirements, machine vision has become one of the most practical and high-ROI automation investments.

What Machine Vision Systems Do

Machine vision systems automatically capture images of parts, components, or products and analyze them using algorithms, rule-based logic, or AI (such as deep learning). These systems can:

Inspect surfaces, assemblies, and packaging for defects

Verify labels, barcodes, and traceability data

Measure dimensions, tolerances, and geometric features

Guide robots or cobots for pick-and-place, assembly, or welding

Sort products based on color, shape, or quality

Detect presence/absence of components

In high-volume manufacturing environments, machine vision operates 24/7 with consistent accuracy, something manual inspection simply can’t match.
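
For label and barcode verification, decoding the symbol is only part of the job; the decoded digits can also be checked for consistency. As a minimal sketch, assuming the line reads GS1 codes such as EAN-13 or GTIN-14 (an assumption, since no symbology is named here), the standard GS1 check digit can be recomputed as follows. The function names are illustrative.

```python
def gs1_check_digit(data_digits: str) -> int:
    """Compute the GS1 check digit for the digits that precede it."""
    total = 0
    # Weights alternate 3, 1, 3, ... starting from the rightmost data digit.
    for i, ch in enumerate(reversed(data_digits)):
        total += int(ch) * (3 if i % 2 == 0 else 1)
    return (10 - total % 10) % 10


def is_valid_gtin(code: str) -> bool:
    """Return True if the last digit of `code` matches the computed check digit."""
    if not code.isdigit() or len(code) not in (8, 12, 13, 14):
        return False
    return gs1_check_digit(code[:-1]) == int(code[-1])


print(is_valid_gtin("4006381333931"))  # a commonly cited valid EAN-13
print(is_valid_gtin("4006381333932"))  # same code with a corrupted last digit
```

A check like this catches misreads and misprints, but it does not confirm that the code belongs to the right product; that still requires a lookup against the expected value for the current recipe.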

Core Components of a Machine Vision System

While individual setups vary based on the application, most systems include the following key components:

Cameras: Different camera types are chosen depending on product movement, inspection tolerance, and lighting conditions:

2D cameras for standard inspection and code reading

3D cameras for depth measurements, robot guidance, and surface profiling

Line-scan cameras for continuous items like paper, film, textiles, or metals

Optics: Lenses determine the field of view, depth of field, and imaging clarity. Industrial-grade optics ensure consistent performance in demanding environments.

Lighting: Lighting is often the most critical factor. Options include:

Bright-field and dark-field lighting

Backlighting

Structured light projection

Infrared and polarized lighting

Image Acquisition Hardware: Frame grabbers and industrial PCs capture and process images at millisecond speeds.

Processing Software: This software interprets the images, using tools such as:

Rule-based algorithms

Edge detection

Pattern matching

OCR/OCV (optical character recognition/verification)

Deep learning models

Integration with Factory Systems: Machine vision often integrates with:

Programmable Logic Controllers (PLCs)

Robots and cobots

Conveyors and sortation systems

MES/ERP platforms for data-driven decision making

Machine Vision Lighting Guide: How to Make Defects "Pop"

Your entire vision system implementation depends on one thing: the quality of the image you capture. Even the best software, algorithms, or AI models cannot rescue a low-contrast, overexposed, or glare-filled image.

This is why experienced vision system integrators put most of their effort into lighting and optics: choosing the right light is often the difference between success and failure.

Below is a breakdown of the most common machine vision lighting techniques and where they deliver the best results.

| Lighting Type | How It Works | Best For (Application) |
|---|---|---|
| Backlight | Light shines from behind the part to create a crisp silhouette. | Dimensional measurement, edge detection, and hole identification. |
| Dome Light | Soft, diffuse illumination eliminates glare by lighting from all directions. | Shiny, reflective, or curved surfaces; eliminating hotspots. |
| Low-Angle Light | Light skims across the surface, exaggerating shadows and texture. | Scratches, surface defects, engravings, subtle texture changes. |
| Coaxial Light | Light travels along the same axis as the lens for uniform reflection. | Flat, reflective surfaces like glass, wafers, and polished metals. |
| Ring Light | LEDs arranged around the lens provide direct, even illumination. | General inspection, OCR/OCV, matte finish analysis. |
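
When several lighting techniques look plausible, one way to compare them during trials is to put a number on how much the defect stands out. The sketch below uses Michelson contrast between the mean gray level of the defect region and of the surrounding background. The gray values are hypothetical samples, not measurements, and contrast is only one of several reasonable criteria.

```python
from statistics import mean


def michelson_contrast(defect_pixels, background_pixels):
    """Contrast between mean defect and mean background gray levels (0 to 1)."""
    d = mean(defect_pixels)
    b = mean(background_pixels)
    if d + b == 0:
        return 0.0
    return abs(d - b) / (d + b)


# Hypothetical 8-bit gray values sampled from the same scratch under two setups.
trials = {
    "ring light": michelson_contrast([118, 122, 120], [128, 131, 125]),
    "low-angle light": michelson_contrast([210, 205, 215], [60, 58, 62]),
}

best_setup = max(trials, key=trials.get)
for name, contrast in trials.items():
    print(f"{name}: contrast = {contrast:.3f}")
print("Highest contrast:", best_setup)
```

In these made-up samples the low-angle setup wins, which matches the table's guidance for scratches, but the ranking should always come from images of real parts.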

Pre-Implementation Planning: Your Project Success Blueprint

Successful machine vision projects don’t start with hardware. They start with clarity. Before selecting cameras, software, or integrators, U.S. manufacturers must evaluate their production environment, define measurable goals, and determine whether machine vision is the right fit for the application.

A thorough planning phase ensures the system delivers long-term ROI, remains scalable, and integrates seamlessly with existing automation.

1. Define the Manufacturing Problem Clearly

Begin by documenting the specific problem the vision system must solve. This step prevents scope creep and ensures technical decisions align with operational goals.

Key questions to answer:

What defect or failure are you trying to eliminate?

What process bottleneck is slowing throughput?

What quality, safety, or compliance requirement are you failing to meet?

Where are manual inspectors inconsistent or overloaded?

Which part(s) or product(s) must be analyzed?

Typical problem examples:

Missing components on PCB assemblies

Incorrect labels, barcodes, or date codes

Cosmetic imperfections in automotive plastics

Dimensional variations in machined parts

Contaminants in food or pharmaceutical packaging

Clearly defining the problem helps set realistic technical specifications later in the process.

2. Assess Application Feasibility

Not all applications are suitable for machine vision without adjustments. A feasibility assessment determines whether a vision solution can reliably detect features, defects, or patterns under real production conditions.

Key feasibility factors:

Lighting Conditions:

Glare, shadows, or reflections

Ambient light changes (day/night shifts, open factory bays)

Surface finish variations (matte vs. glossy)

Part Presentation:

Can the part be consistently oriented?

Do parts move too fast for stable imaging?

Is there vibration or inconsistent spacing?

Feature Visibility:

Are the defects visually detectable?

Is the feature too small for the required resolution?

Are edges distinguishable from background noise?

Environmental Factors:

Cleanroom requirements

Dust, oil, coolant spray

Temperature fluctuations

Available physical space for cameras and lighting

Accuracy & Speed Requirements:

Cycle time per part

Required measurement tolerances

Maximum allowable false rejects/accepts

A proof-of-concept (PoC) or feasibility test, conducted with sample parts and controlled lighting, is often the most reliable way to validate application fit.
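
Two of the feasibility questions above, whether a feature is too small for the available resolution and whether parts move too fast for stable imaging, can be estimated on paper before any PoC. The following is a rough sketch: the default of 3 pixels across the smallest defect is a common rule of thumb rather than a requirement from this guide, and the function names and example numbers are illustrative.

```python
import math


def required_pixels(fov_mm, min_defect_mm, pixels_per_defect=3):
    """Pixels needed along one axis so the smallest defect spans `pixels_per_defect` pixels."""
    return math.ceil(fov_mm / min_defect_mm * pixels_per_defect)


def max_exposure_us(line_speed_mm_s, mm_per_pixel, max_blur_px=1.0):
    """Longest exposure (microseconds) that keeps motion blur within `max_blur_px` pixels."""
    return max_blur_px * mm_per_pixel / line_speed_mm_s * 1e6


# Hypothetical application: 120 mm field of view, 0.25 mm defects, 500 mm/s conveyor.
pixels = required_pixels(fov_mm=120, min_defect_mm=0.25)
mm_per_pixel = 120 / pixels
exposure = max_exposure_us(line_speed_mm_s=500, mm_per_pixel=mm_per_pixel)

print(f"Pixels needed across the field of view: {pixels}")
print(f"Exposure limit for 1 px of blur: {exposure:.0f} microseconds")
```

If the exposure limit turns out to be very short, the lighting has to supply enough intensity (for example, by strobing) to produce a usable image in that time.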

3. Perform Cost-Benefit & ROI Analysis

Machine vision systems deliver fast ROI when properly aligned with business goals. U.S. manufacturers typically recover their investment within 6 to 24 months, depending on the application.

Core cost factors:

Cameras and optics

Lighting and control hardware

Industrial PCs or edge devices

Software licenses (rule-based or AI-driven)

Robotic/PLC integration

Mechanical fixtures

Engineering hours (design, validation, commissioning)

Operator training

Operational savings often include:

Reduced scrap and rework

Lower labor costs from reduced manual inspection

Increased throughput

Avoided compliance failures (FDA, FAA, automotive)

Decreased warranty claims and recalls

Quantifying ROI:

Manufacturers should estimate:

Cost per defective part before vision

Expected defect reduction percentage

Added production capacity from faster inspection

Labor hours saved per shift

A well-designed vision system typically pays for itself quickly, especially in high-volume U.S. manufacturing environments where even small scrap reductions add up.
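
The estimates listed above can be combined into a simple payback calculation. This is a minimal sketch with hypothetical figures; a real model would also account for ramp-up time, downtime, and financing, and the function names are illustrative.

```python
def monthly_scrap_savings(parts_per_month, defect_rate, defect_reduction, cost_per_defect):
    """Savings from defects the vision system prevents or catches early."""
    return parts_per_month * defect_rate * defect_reduction * cost_per_defect


def payback_months(system_cost, scrap_savings, labor_savings, running_cost=0.0):
    """Months to recover the investment, or None if there is no net monthly saving."""
    net = scrap_savings + labor_savings - running_cost
    if net <= 0:
        return None
    return system_cost / net


# Hypothetical inputs: 200,000 parts/month, 2% defects, 50% reduction, $3 per defect.
scrap = monthly_scrap_savings(200_000, 0.02, 0.5, 3.0)
months = payback_months(system_cost=150_000, scrap_savings=scrap,
                        labor_savings=5_000, running_cost=1_000)
print(f"Scrap savings per month: ${scrap:,.0f}")
print(f"Estimated payback: {months:.1f} months")
```

Running the same calculation with pessimistic and optimistic inputs gives a range rather than a single number, which is usually more honest for a budget request.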

4. Document Technical Requirements

Once feasibility and ROI are understood, document all performance expectations. This document becomes the foundation for design, vendor selection, and final validation.

Define measurable requirements such as:

Minimum defect size (e.g., 0.2 mm scratch)

Required resolution and field of view

Inspection rate (parts/minute or ft/min)

Acceptable false reject rate (e.g., < 1%)

Camera trigger requirements

Allowed system latency

Integration points with PLCs, robots, or MES

A clear technical specification speeds up quoting and ensures all vendors understand the expected performance.
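
One way to keep these requirements unambiguous is to capture them in a structured record that can be checked automatically and shared with vendors. The sketch below is illustrative: the `VisionSpec` class, its field names, and the sanity checks are assumptions, not a standard format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VisionSpec:
    min_defect_mm: float
    field_of_view_mm: float
    parts_per_minute: float
    max_false_reject_rate: float  # fraction, e.g. 0.01 for 1%
    max_latency_ms: float

    def cycle_time_ms(self) -> float:
        """Time available per part at the stated inspection rate."""
        return 60_000 / self.parts_per_minute

    def warnings(self) -> list:
        """Return human-readable notes about values that deserve a second look."""
        notes = []
        if not 0 <= self.max_false_reject_rate <= 1:
            notes.append("false reject rate must be a fraction between 0 and 1")
        if self.min_defect_mm >= self.field_of_view_mm:
            notes.append("minimum defect size is not smaller than the field of view")
        if self.max_latency_ms > self.cycle_time_ms():
            notes.append("latency exceeds one cycle; results must be queued per part")
        return notes


spec = VisionSpec(min_defect_mm=0.2, field_of_view_mm=100, parts_per_minute=300,
                  max_false_reject_rate=0.01, max_latency_ms=150)
print(spec.cycle_time_ms(), spec.warnings())
```

A latency longer than one cycle is not necessarily a defect in the specification, which is why it is reported as a note rather than an error.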

5. Build a Cross-Functional Project Team

Machine vision touches multiple departments. Involving the right people early prevents misalignment later.

Recommended roles:

Quality engineers – define defect criteria

Manufacturing engineers – assess process constraints

Maintenance/automation teams – handle integration

IT team – oversee networking and data handling

Operations leaders – ensure production readiness

Safety officers – verify compliance

Cross-functional alignment improves adoption and ensures the system fits both operational needs and long-term automation plans.

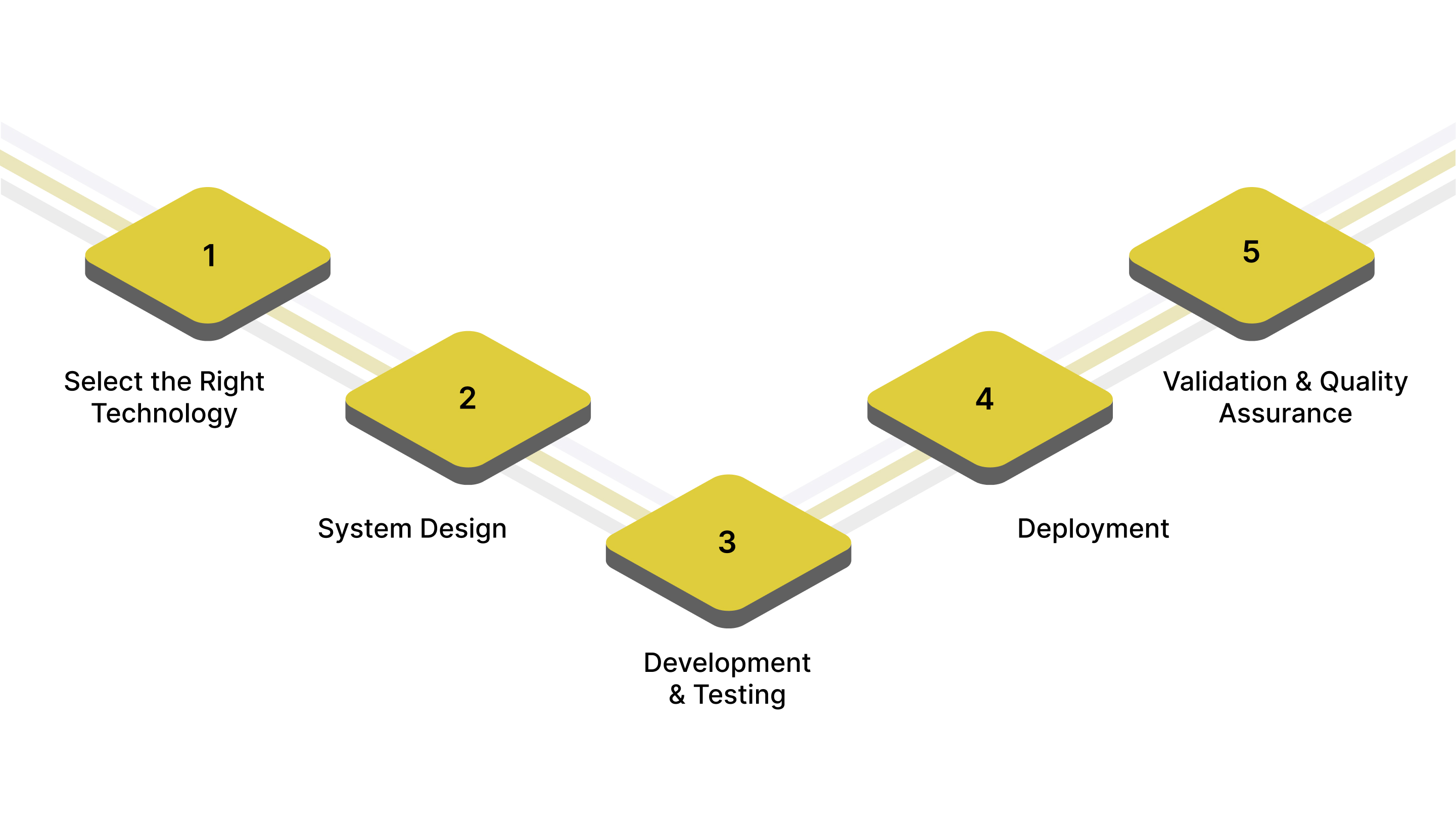

Step-by-Step Machine Vision Implementation Process

Implementing a machine vision system requires a structured approach that balances technical design, production realities, and long-term maintainability. The following step-by-step guide outlines the full journey, from selecting the right technology to deploying a validated system on the factory floor.

Step 1: Select the Right Technology

Choosing the appropriate technology is the foundation of a successful vision project. Your application requirements, environmental constraints, and accuracy expectations must drive the selection.

Key decisions include:

Camera Type:

2D cameras: For presence/absence, label checks, surface inspection.

3D cameras: For height, volume, deformation, and robot guidance.

Line-scan cameras: For continuous materials (paper, film, metals, textiles).

Resolution & Frame Rate: Depends on defect size, part speed, and field of view.

Higher resolution → finer detail

Higher frame rate → faster-moving lines

Lens Selection: Must match:

Working distance

Field of view

Required depth of field

Distortion limitations

Lighting Strategy: Lighting is often the most influential factor in vision performance. Choose based on surface properties:

Backlighting: Silhouettes, edge detection

Bright/Dark-field: Surface scratches, dents

Structured light: 3D surface mapping

Infrared/UV: Material penetration or fluorescence

Software Selection: Options include:

Rule-based platforms (pattern matching, blob analysis, OCR)

AI-based platforms (deep learning classification, segmentation)

Hybrid systems for maximum flexibility

Processing Location:

Edge processing: Real-time inspection, minimal latency

Cloud/hybrid: AI model training, cross-site analytics

A technology audit ensures your selection meets expectations for speed, accuracy, and reliability.
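
As a worked example of the lens and frame-rate decisions above, the sketch below estimates a focal length from the working distance, sensor width, and field of view using the thin-lens approximation, then picks the closest lens from a list. The stock focal lengths and the example numbers are hypothetical, and the working distance is assumed to be measured from the lens to the part.

```python
def estimate_focal_length_mm(working_distance_mm, sensor_width_mm, fov_width_mm):
    """Thin-lens estimate: f = WD * sensor / (FOV + sensor)."""
    return working_distance_mm * sensor_width_mm / (fov_width_mm + sensor_width_mm)


def nearest_stock_lens(focal_mm, stock=(8, 12, 16, 25, 35, 50)):
    """Pick the closest focal length from a (hypothetical) list of available lenses."""
    return min(stock, key=lambda f: abs(f - focal_mm))


def min_frame_rate_fps(parts_per_minute, images_per_part=1):
    """Lowest frame rate that still captures every part."""
    return parts_per_minute * images_per_part / 60


# Hypothetical setup: 300 mm working distance, 8 mm wide sensor, 120 mm field of view.
ideal = estimate_focal_length_mm(300, 8, 120)
lens = nearest_stock_lens(ideal)
print(f"Ideal focal length: {ideal:.2f} mm, nearest stock lens: {lens} mm")
print(f"Minimum frame rate at 600 parts/min: {min_frame_rate_fps(600):.0f} fps")
```

Choosing a stock lens shorter than the ideal value widens the field of view at the same distance, so the resolution budget should be rechecked after the lens is picked.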

Step 2: System Design

Once the core technology is selected, the next step is building a robust system that fits your production line.

Mechanical Setup: Design stable and vibration-free mounting for:

Cameras

Lights

Triggers

Sensors

Enclosures (for dust, water, or environmental protection)

Part Presentation: Consistent part orientation is key. Design for:

Proper spacing

Reduced rotation or rolling

Controlled motion using guides, rails, or fixtures

Electrical & Control Integration: Ensure clean, synchronized signals between:

Cameras

PLCs

Encoders

Sensors

Robots or cobots

User Interface & Operator Access: Design operator-friendly HMI screens that allow:

Recipe selection

Pass/fail visualization

Real-time metrics

Fault messages

A thoughtful design stage eliminates downstream issues during deployment.

Step 3: Development & Testing

This phase transforms design concepts into a functional vision system and validates its performance using real production data.

Dataset Collection: Capture a representative range of:

Good samples

Defective samples

Borderline cases

Environmental variations

Algorithm Development: Develop:

Feature detection rules

Inspection logic

Deep learning models (if applicable)

Measurement tools

Stress Testing: Evaluate performance against:

Speed fluctuations

Part variations

Lighting changes

Vibration effects

Different shifts or operators

Iterative Optimization: Refine:

Camera angles

Lighting intensity

Processing thresholds

Trigger timing

AI model accuracy

A robust testing phase ensures production readiness.
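
Refining processing thresholds is easier when the trade-off is made explicit. Assuming each sample in the collected dataset has a defect score where higher means more defect-like (an assumption about how the inspection tool reports results), a threshold sweep shows the false reject and false accept rates at each candidate setting. The scores below are made up for illustration.

```python
def sweep_thresholds(good_scores, defect_scores):
    """Return (threshold, false_reject_rate, false_accept_rate) for each candidate.

    A part is rejected when its score is greater than or equal to the threshold.
    """
    rows = []
    for t in sorted(set(good_scores) | set(defect_scores)):
        false_reject = sum(s >= t for s in good_scores) / len(good_scores)
        false_accept = sum(s < t for s in defect_scores) / len(defect_scores)
        rows.append((t, false_reject, false_accept))
    return rows


def pick_threshold(rows, max_false_accept=0.0):
    """Lowest false reject rate among thresholds that meet the false accept limit."""
    allowed = [r for r in rows if r[2] <= max_false_accept]
    if not allowed:
        return None
    return min(allowed, key=lambda r: r[1])


# Hypothetical scores from labeled good and defective samples.
good = [0.05, 0.10, 0.12, 0.20, 0.35]
defective = [0.30, 0.55, 0.70, 0.90]

rows = sweep_thresholds(good, defective)
best = pick_threshold(rows, max_false_accept=0.0)
print("Chosen (threshold, false reject, false accept):", best)
```

Here the policy is to allow no false accepts and then minimize false rejects; a plant with different priorities would change `max_false_accept` or the selection rule.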

Step 4: Deployment

Once validated, the system is installed and integrated into the live production environment.

Installation:

Mount all hardware

Calibrate cameras and lenses

Configure lighting geometry

Install protective housings

PLC / MES / Robot Integration: Define:

Trigger logic

Reject mechanisms

Alarms and interlocks

Data logging parameters

Communication protocols (EtherNet/IP, PROFINET, OPC UA)

Operator Training: Train operators on:

System start/stop

Changing recipes

Reading pass/fail indicators

Responding to alarms

Managing rejects

Initial Production Run: Conduct supervised runs to catch:

Drift in thresholds

Unexpected product variations

Mechanical interference

Deployment is complete when the system runs reliably without manual intervention.
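
Reject mechanisms usually sit downstream of the camera, so the pass/fail result has to travel with the part until it reaches the reject gate. A common pattern is a shift register advanced by the part trigger. The sketch below illustrates the idea in Python; in production this logic often lives in the PLC and may be driven by an encoder, and the class and parameter names are hypothetical.

```python
from collections import deque


class RejectTracker:
    """Carries each inspection result to a reject gate a fixed number of parts downstream."""

    def __init__(self, parts_between_camera_and_gate):
        if parts_between_camera_and_gate < 1:
            raise ValueError("the gate must be at least one part position downstream")
        n = parts_between_camera_and_gate
        # One slot per part position between the camera and the gate.
        self.slots = deque([False] * n, maxlen=n)

    def on_part_trigger(self, is_defective):
        """Call once per part. Returns True if the part now at the gate must be rejected."""
        at_gate = self.slots[0]
        self.slots.append(is_defective)  # maxlen drops the oldest slot automatically
        return at_gate


tracker = RejectTracker(parts_between_camera_and_gate=3)
inspection_results = [True, False, False, False, False]
gate_actions = [tracker.on_part_trigger(r) for r in inspection_results]
print(gate_actions)  # [False, False, False, True, False]
```

The defective part seen on the first trigger is rejected three triggers later, when it arrives at the gate. Supervised initial runs are a good time to confirm that this offset matches the physical line.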

Step 5: Validation & Quality Assurance

Validation ensures the system performs consistently under real production conditions.

Performance Metrics: Track:

Detection accuracy

False reject rate

False accept rate

Throughput impact

Uptime/availability
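
The first three metrics can be computed from a validation run in which every part's true status is known, for example from a manual audit. A minimal sketch follows, with hypothetical counts and an illustrative function name.

```python
def inspection_metrics(true_reject, false_reject, true_accept, false_accept):
    """Compute accuracy and error rates from validation counts.

    false_reject: good parts the system rejected
    false_accept: defective parts the system passed
    """
    good_parts = true_accept + false_reject
    bad_parts = true_reject + false_accept
    if good_parts == 0 or bad_parts == 0:
        raise ValueError("validation set needs both good and defective parts")
    total = good_parts + bad_parts
    return {
        "accuracy": (true_reject + true_accept) / total,
        "false_reject_rate": false_reject / good_parts,
        "false_accept_rate": false_accept / bad_parts,
    }


# Hypothetical audit of 1,000 parts, 50 of which were truly defective.
metrics = inspection_metrics(true_reject=48, false_reject=10,
                             true_accept=940, false_accept=2)
for name, value in metrics.items():
    print(f"{name}: {value:.3%}")
```

Overall accuracy can look excellent even when the false accept rate is not, because defects are rare, so the two error rates should be tracked separately.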

Calibration Procedures: Regular calibration is essential for:

Cameras

Lenses

Encoders

AI model updates (if required)

Compliance Documentation: For regulated industries (food, pharma, aerospace):

Validation protocols (IQ/OQ/PQ)

Audit logs

Image records

Traceability files

Continuous Improvement: Schedule ongoing reviews:

Add new defect categories

Update AI training sets

Improve lighting for shifting production conditions

Expand integration with MES dashboards

A well-monitored system continues to deliver ROI long after deployment.

Industry-Specific Applications

Different sectors use machine vision to address their unique requirements:

Automotive: High-stakes inspections of welds, sealants, and assemblies improve safety and reliability. Vision also supports VIN validation and component traceability.

Electronics: Systems analyze tiny components, assess solder joints, and perform 3D height checks to detect defects that could lead to failure in end-use applications.

Food & Beverage: Vision detects contamination, checks fill levels, monitors color consistency, and verifies expiration dates and lot codes for full traceability.

Medical & Pharmaceutical: Packaging validation, labeling accuracy, and device assembly checks ensure compliance and patient safety.

Paper & Pulp: Continuous web inspection catches tears, holes, and surface inconsistencies while monitoring color and basis weight for quality control.

Packaging: Vision confirms label presence and accuracy, inspects seals, and detects damage that could compromise product integrity.

Industrial & Construction: Systems verify heavy equipment components, inspect welds, measure construction materials, and assess surface quality to ensure compliance and structural reliability.

AI vs Traditional Vision Systems

Recognizing the distinctions between rule-based and AI-driven vision solutions helps manufacturers choose the right technology for each application.

Traditional Vision Systems

Conventional machine vision relies on predefined, rule-based algorithms that perform reliably when products and environmental conditions remain consistent. They excel in applications with stable part appearance, fixed positions, and clear inspection criteria.

These systems deliver predictable results and are easier to troubleshoot when problems occur. They also tend to deploy faster and require less processing power, making them a practical, cost-efficient choice for many standard inspection tasks.
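
To make the rule-based approach concrete, here is a minimal sketch of blob analysis used as a presence/absence check: count the connected bright regions in a thresholded image and compare the count with the expected number of components. The tiny binary image, the 4-connectivity choice, and the minimum area filter are illustrative assumptions; commercial tools implement the same idea with many more options.

```python
def count_blobs(binary, min_area=1):
    """Count 4-connected regions of nonzero pixels with at least `min_area` pixels."""
    rows, cols = len(binary), len(binary[0])
    seen = [[False] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if not binary[r][c] or seen[r][c]:
                continue
            # Flood fill from this pixel to measure the region's area.
            area = 0
            stack = [(r, c)]
            seen[r][c] = True
            while stack:
                y, x = stack.pop()
                area += 1
                for dy, dx in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                    ny, nx = y + dy, x + dx
                    if (0 <= ny < rows and 0 <= nx < cols
                            and binary[ny][nx] and not seen[ny][nx]):
                        seen[ny][nx] = True
                        stack.append((ny, nx))
            if area >= min_area:
                count += 1
    return count


# Hypothetical thresholded image: three components plus one single-pixel speck.
image = [
    [0, 1, 1, 0, 0, 0],
    [0, 1, 1, 0, 0, 1],
    [0, 0, 0, 0, 0, 0],
    [1, 1, 0, 0, 1, 1],
    [1, 1, 0, 0, 1, 1],
]

expected_components = 3
found = count_blobs(image, min_area=2)
print("PASS" if found == expected_components else "FAIL", found)
```

Every step here is explicit and inspectable, which is the troubleshooting advantage described above. The flip side is that the rule depends on a stable threshold and consistent part presentation.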

AI-Based Vision Systems

AI-enabled systems use deep learning models that interpret complex patterns and adapt as new data becomes available. They are particularly effective in situations with high variability, such as natural materials, inconsistent lighting, or aesthetic quality checks, where rigid rules may fail or become cumbersome to maintain.

Key benefits of AI-based vision include:

Strong scalability for accommodating new product variants or evolving inspection needs

Flexibility to adjust to dynamic production environments

Improved accuracy in complex or subtle defect scenarios

Minimal programming effort for tasks involving nuanced or unpredictable features

AI vision is especially advantageous in high-speed, tight-tolerance operations where traditional systems might miss minor issues or produce excessive false positives.

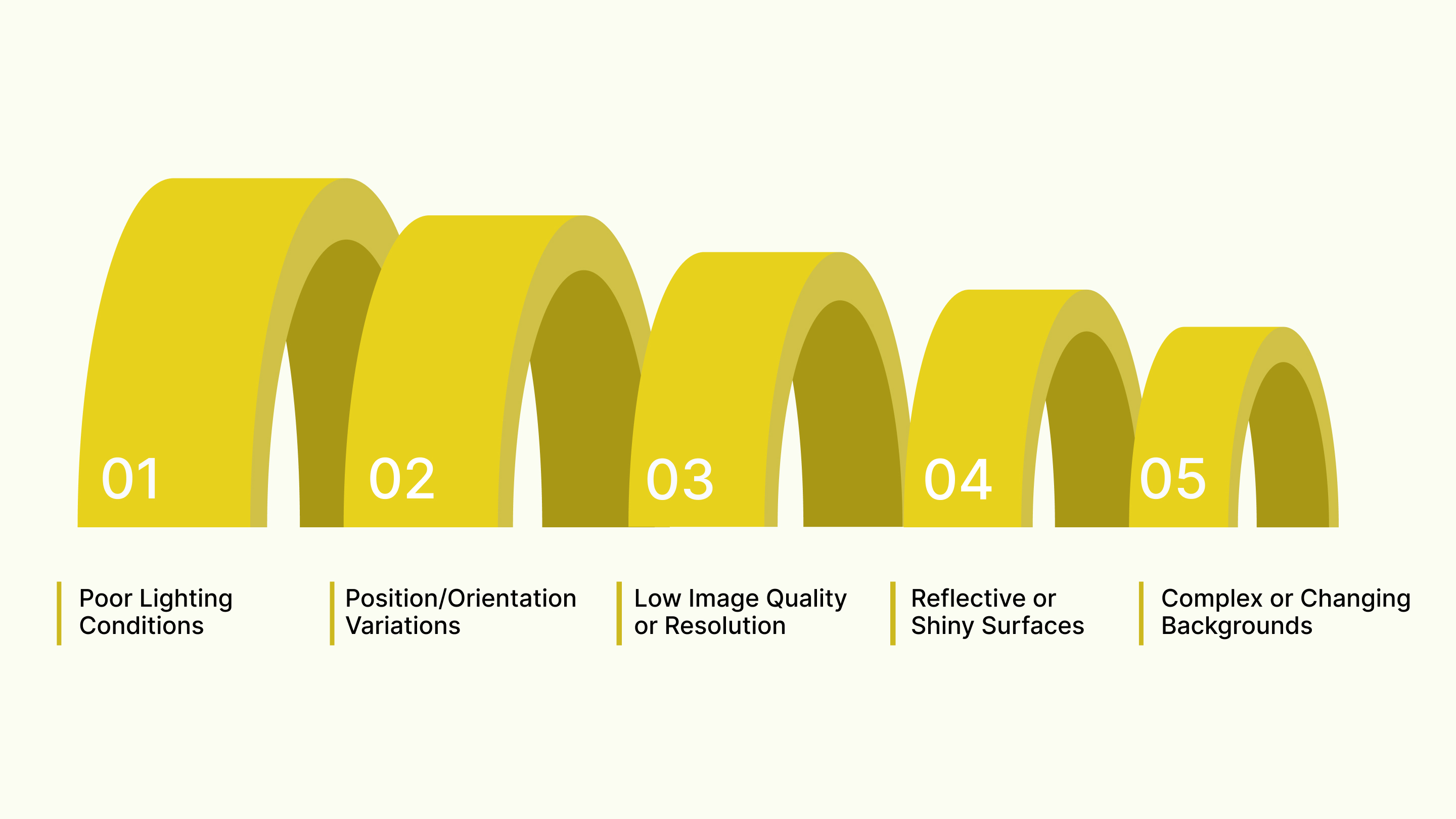

Challenges in the Implementation of Machine Vision Systems

Even the most advanced machine vision systems can underperform if real-world production factors are not properly addressed. U.S. manufacturers frequently encounter issues related to lighting, part variability, environmental conditions, and algorithm performance.

Understanding these challenges and how to mitigate them ensures long-term reliability, accuracy, and ROI.

Poor Lighting Conditions: Inconsistent or inadequate lighting reduces image clarity, causing detection errors. Use controlled illumination, diffusers, and consistent lighting setups for stable results.

Variations in Object Position or Orientation: Objects shifting position or angle confuse algorithms. Implement robust fixturing, guided alignment, or rotation-invariant vision models to ensure reliable detection.

Low Image Quality or Resolution Issues: Blurry or low-resolution images hinder accurate inspections. Select higher-resolution cameras, proper lenses, and maintain optimal focal distance for better clarity.

Reflective or Shiny Surfaces: Glare causes misreads and false detections. Use polarizing filters, angled lighting, or diffuse illumination to minimize reflections and improve accuracy.

Complex or Changing Backgrounds: Busy backgrounds disrupt segmentation. Use background subtraction, controlled environments, or AI-based detection models trained to ignore irrelevant patterns.

Overcoming these implementation challenges is essential for achieving consistent accuracy, dependable performance, and long-term ROI in machine vision systems.

How Hammer-IMS Can Help

Implementing a machine vision system is only as successful as the partner guiding you through it. Hammer-IMS brings a unique combination of advanced sensing technology, industrial expertise, and end-to-end project support that helps U.S. manufacturers deploy machine vision with confidence and long-term scalability.

Here’s how Hammer-IMS strengthens every stage of your machine vision implementation:

High-Precision, Non-Contact Measurement Technology: Specializes in Millimeter-Wave (MMW) non-contact measurement systems, delivering ultra-stable and highly accurate thickness, density, and material analysis.

Expertise in Complex & High-Speed Manufacturing Environments: From plastics and foams to composites, textiles, and nonwovens, Hammer-IMS equipment is engineered for demanding industrial conditions.

Seamless Integration Into Existing Production Lines: Designed for easy integration with PLCs, MES platforms, and industrial automation equipment.

Robust, Low-Maintenance Industrial Hardware: Built for long-term reliability with minimal calibration, low maintenance demands, and stable measurement performance.

Whether you're improving quality control, reducing material waste, or building a more automated production process, Hammer-IMS provides the advanced technology and engineering expertise needed to make your machine vision project a long-term success.

Conclusion

Implementing a machine vision system is a strategic requirement for manufacturers aiming to stay competitive. With rising pressure for higher quality, reduced defects, and greater operational efficiency, machine vision provides the accuracy, speed, and consistency that manual processes simply cannot match.

As U.S. manufacturing continues to expand through automation, digitalization, and advanced quality control technologies, businesses that invest in machine vision today will be better positioned for resilience, innovation, and sustained growth tomorrow.

When executed correctly, a machine vision system becomes far more than an inspection tool. It becomes a core driver of smarter, safer, and more efficient manufacturing.

Ready to Elevate Your Quality Control with Machine Vision?

If you're planning to implement or upgrade a machine vision system, partnering with the right provider makes all the difference. Hammer-IMS helps manufacturers achieve higher accuracy, lower scrap rates, and more consistent production outcomes with advanced non-contact measurement technology and expert engineering support.

Take the next step toward smarter, more reliable manufacturing.

Request a consultation with Hammer-IMS.

Frequently Asked Questions (FAQs)

1. What is the implementation of computer vision?

Implementation of computer vision involves integrating cameras, algorithms, and software into workflows to automatically detect, analyze, and interpret visual information, improving accuracy, automation, and decision-making within industrial or digital systems.

2. What is the meaning of a vision system?

A vision system is a technology setup combining cameras, lighting, sensors, and image-processing software to capture, evaluate, and act on visual data for inspection, measurement, guidance, or automation tasks.

3. What skills are needed for computer vision?

Computer vision requires skills in programming, machine learning, mathematics, image processing, deep learning frameworks, data annotation, model optimization, and understanding camera systems, optics, lighting, and real-world deployment challenges.

4. What are the main components of a vision system?

A vision system includes cameras, lenses, lighting, image sensors, processing hardware, software algorithms, communication interfaces, and integration tools enabling accurate image capture, analysis, and automated decision-making in manufacturing or digital environments.

5. What are some examples of computer vision?

Examples include defect detection in manufacturing, facial recognition, barcode reading, autonomous vehicle perception, medical imaging analysis, object tracking, quality inspection, robotics guidance, and automated retail checkout systems.